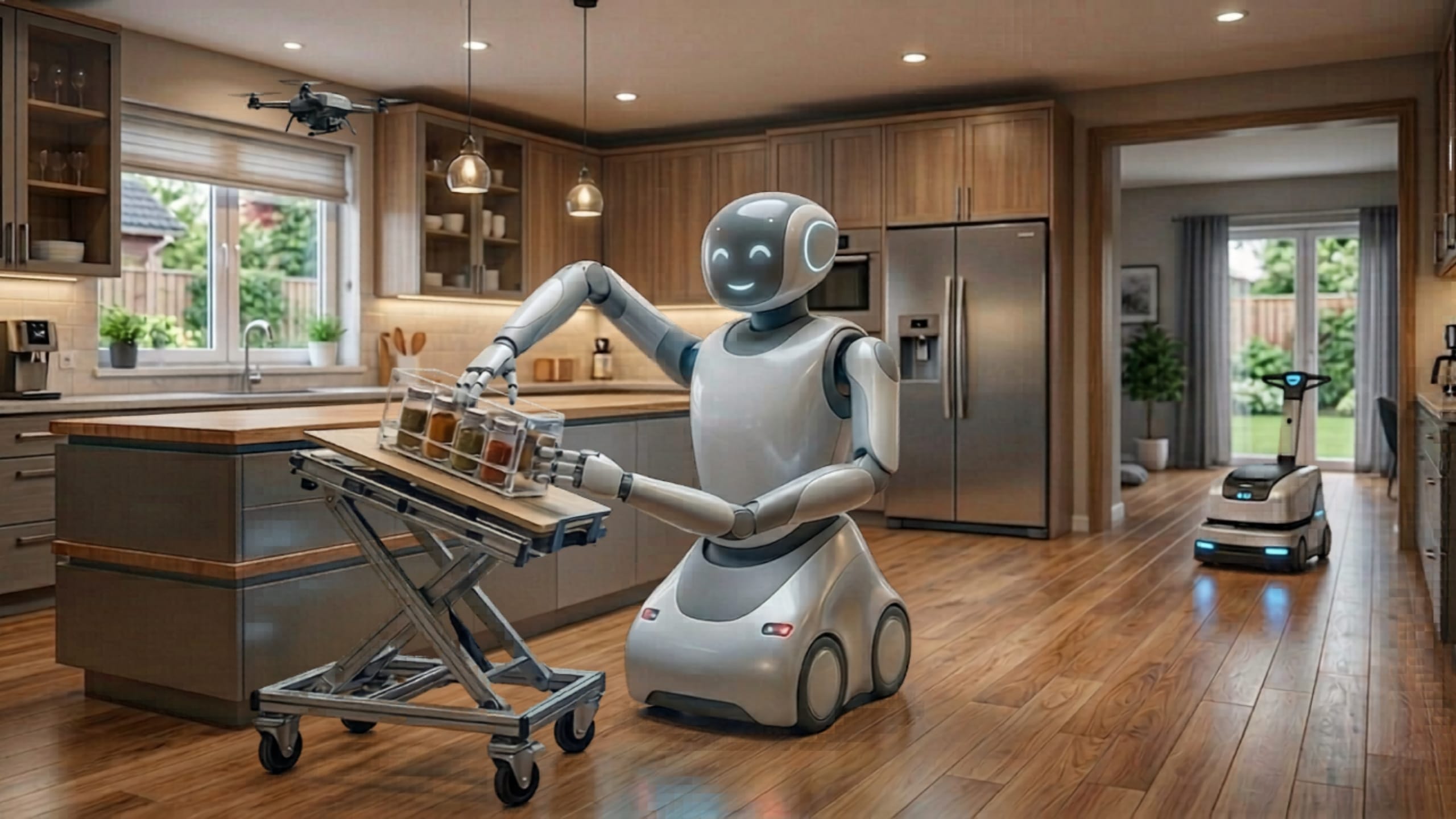

LOS ANGELES – The landscape of artificial intelligence is currently undergoing a foundational shift, moving beyond the digital confines of "bits and bytes" and entering the tangible world of atoms. This emergence of Physical AI represents a departure from the traditional robotics that have dominated industrial sectors for decades. While classic robots rely on rigid, rule-based programming to operate within highly controlled, engineered environments, the new generation of physical agents is being designed to perceive, reason about, and adapt to the inherent unpredictability of the real world. This transition marks the beginning of an era where machines no longer just execute commands but understand the physical context of their surroundings.

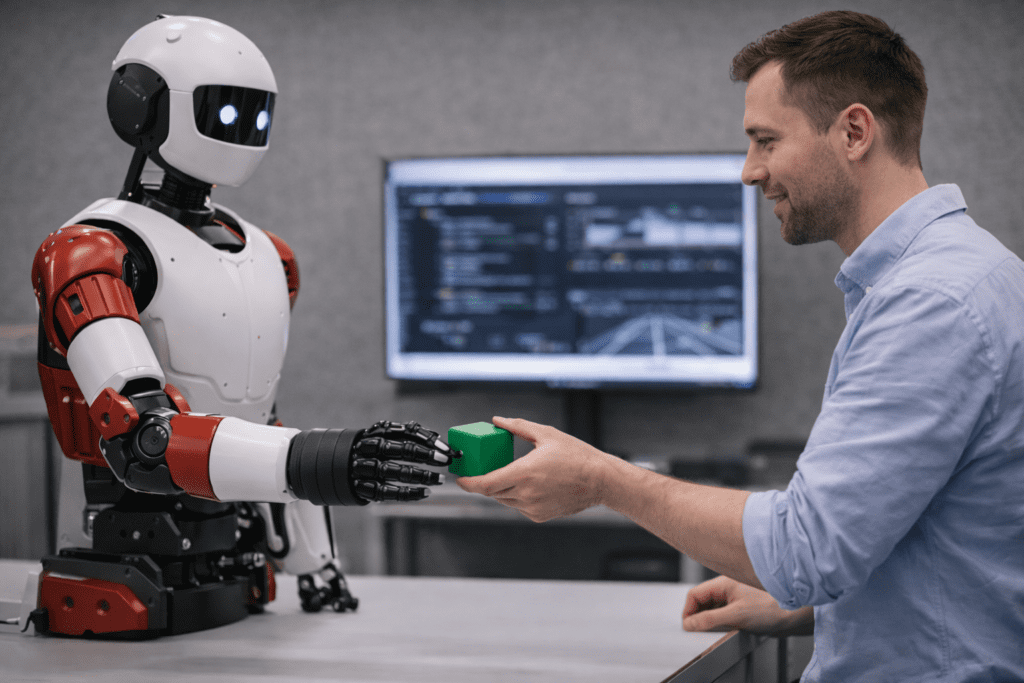

Several technological pillars are converging to make this leap possible, most notably the development of Vision-Language-Action (VLA) models. Unlike earlier robot-learning systems, which were typically trained for a single narrow task, VLA models aim to give robots a generalist's understanding of the world. By combining visual perception with language understanding and mapping both directly to motor actions, these models can interpret complex instructions and apply broadly learned knowledge about how objects behave to novel situations. For example, a robot equipped with a VLA model does not need to be specifically programmed for every individual object it encounters; instead, it draws on what it has learned about object manipulation and spatial relationships to attempt unfamiliar tasks.

Supporting these VLA models is the rise of large-scale foundation models. Much like the large language models that transformed digital communication, foundation models for robotics are trained on massive, diverse datasets. This scale allows robots to move beyond simple, scripted movements and handle a wide range of tasks with a level of nuance previously reserved for human operators. Progress toward this kind of general-purpose capability in the physical world is further accelerated by dramatic advances in compute. The modern GPU has become the engine of this revolution, providing the processing power needed both to train large models and to run the detailed physics simulations in which much of that training takes place.

However, moving an AI from a digital brain into a physical body presents its own challenges, the best known of which is the "sim-to-real gap": behaviors learned in simulation often degrade when deployed on real hardware, because no simulator perfectly captures real-world physics, sensors, and appearance. Developers still rely heavily on sophisticated simulated environments, since training a robot in the real world is slow, expensive, and potentially dangerous. To help a robot's digital training carry over to the real world, engineers use a technique called Domain Randomization. By varying the conditions within a simulation, such as the lighting, the orientation of parts, or surface friction, developers force the AI to become robust to that variation. This keeps the robot from "memorizing" one specific environment and instead encourages it to learn the underlying principles of the task, preparing it for the chaotic variables of a warehouse or a household.
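The per-episode sampling that domain randomization relies on can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not any particular simulator's API: the parameter names and ranges in `RANGES`, the `SimConfig` type, and the commented-out `run_episode` call are hypothetical stand-ins for whatever a real simulator exposes.

```python
import random
from dataclasses import dataclass

# Hypothetical randomization ranges; real values depend on the robot, sensors, and task.
RANGES = {
    "light_intensity": (0.3, 1.5),    # relative to nominal scene lighting
    "part_yaw_deg": (-180.0, 180.0),  # orientation of the part on the work surface
    "friction": (0.4, 1.2),           # surface friction coefficient
}

@dataclass
class SimConfig:
    light_intensity: float
    part_yaw_deg: float
    friction: float

def sample_config(rng: random.Random) -> SimConfig:
    """Draw a fresh set of simulation conditions uniformly from RANGES."""
    return SimConfig(**{name: rng.uniform(lo, hi) for name, (lo, hi) in RANGES.items()})

def train(num_episodes: int, seed: int = 0) -> list:
    """Sample new conditions for every episode so no single setup can be memorized."""
    rng = random.Random(seed)
    configs = []
    for _ in range(num_episodes):
        cfg = sample_config(rng)
        # run_episode(policy, cfg)  # hypothetical simulator call, omitted here
        configs.append(cfg)
    return configs

configs = train(1000)
print(configs[0])
```

Because each episode sees a different draw, a policy trained this way cannot rely on any single lighting setup or friction value. The ranges themselves are a design choice: too narrow and the gap remains, too wide and the task can become needlessly hard to learn.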

The primary method of teaching these agents within their digital playgrounds is Reinforcement Learning (RL). This is a trial-and-error approach in which the robot is given a goal and left to discover the best way to achieve it. In the simplest setup, the robot receives a numerical reward when it succeeds and none when it fails; many practical reward functions also grant partial credit for progress or penalize undesirable actions such as collisions. Over millions of iterations, the AI refines its behavior, discovering movements and strategies that a human programmer might never have conceived. This autonomous learning process is what allows Physical AI to develop the fluid, adaptive movements necessary for navigating messy environments.
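The trial-and-error loop described above can be illustrated with tabular Q-learning, one classic RL algorithm, chosen here only because it fits in a few lines; robotics systems generally train neural-network policies with other algorithms and at far larger scale. The toy corridor environment, its sparse reward (1.0 on reaching the goal, 0.0 otherwise), and all hyperparameters are hypothetical.

```python
import random

N_STATES = 5          # positions 0..4 in a toy corridor
GOAL = N_STATES - 1   # reaching position 4 counts as success
MOVES = (-1, +1)      # action 0 steps left, action 1 steps right

def step(state, action):
    """Toy environment: reward 1.0 on reaching the goal, 0.0 otherwise."""
    nxt = min(max(state + MOVES[action], 0), GOAL)
    done = nxt == GOAL
    return nxt, (1.0 if done else 0.0), done

def q_learning(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.2, max_steps=100, seed=0):
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]  # q[state][action] value estimates
    for _ in range(episodes):
        state = 0
        for _ in range(max_steps):
            if rng.random() < epsilon:
                action = rng.randrange(2)  # explore: try a random action
            else:
                best = max(q[state])       # exploit, breaking ties at random
                action = rng.choice([a for a in range(2) if q[state][a] == best])
            nxt, reward, done = step(state, action)
            # Move the estimate toward reward + discounted value of the best next action
            target = reward if done else reward + gamma * max(q[nxt])
            q[state][action] += alpha * (target - q[state][action])
            state = nxt
            if done:
                break
    return q

q = q_learning()
policy = ["right" if q[s][1] > q[s][0] else "left" for s in range(GOAL)]
print(policy)
```

After training, the greedy policy should step right from every non-goal position, a strategy the agent discovered purely from the reward signal rather than from hand-written rules.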

The final piece of the Physical AI puzzle is the continuous feedback loop between the digital and physical realms. Bridging the sim-to-real gap is not a one-time event but an iterative journey. Developers regularly capture data from a robot's performance in the real world, identifying where the simulation failed to account for a specific physical nuance. This data is then fed back into the simulated environment to refine the training models further. This constant cycle of simulation, real-world testing, and data-driven refinement helps the AI become increasingly reliable and capable of handling complex "unknown unknowns."
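One common way to close this loop is system identification: fitting a simulator parameter to real measurements and updating the simulation accordingly. The sketch below applies it to surface friction, one of the randomized conditions mentioned earlier, under a deliberately simple physical model (a block sliding on a flat surface, decelerating at a = mu * g). The function names, the measurements, and the re-centering rule are all hypothetical.

```python
import statistics

G = 9.81  # gravitational acceleration in m/s^2

def estimate_friction(decelerations):
    """Estimate a friction coefficient from measured sliding decelerations (m/s^2).

    Assumes the simple model a = mu * g; needs at least two measurements.
    """
    mus = [a / G for a in decelerations]
    return statistics.mean(mus), statistics.stdev(mus)

def refine_friction_range(sim_range, decelerations, k=2.0, min_half_width=0.02):
    """Compare the simulator's friction range with real data and re-center it.

    Returns the new range and whether the real estimate fell outside the old one,
    i.e. whether the simulation had missed this physical nuance.
    """
    mu, sd = estimate_friction(decelerations)
    missed = not (sim_range[0] <= mu <= sim_range[1])
    half = max(k * sd, min_half_width)            # keep some randomization around the estimate
    new_range = (max(mu - half, 0.0), mu + half)  # friction cannot be negative
    return new_range, missed

# Hypothetical decelerations logged from four real-world trials
real_decels = [2.90, 3.00, 2.95, 3.05]
new_range, missed = refine_friction_range((0.4, 1.2), real_decels)
print(new_range, missed)
```

The next training round would then sample friction from the re-centered range, and the cycle repeats as new real-world data arrives.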

As Physical AI continues to mature in 2026, the implications for industry and daily life are profound. We are moving toward a future where robots are no longer confined to safety cages on factory floors but are capable of working alongside humans in dynamic spaces. By shifting the focus from bits to atoms, researchers are finally giving AI a body that is as capable as its mind. The result is a new class of intelligent machines that do not just exist in the world, but truly interact with it, marking one of the most significant milestones in the history of human innovation.