MIT UNIVERSITY — The development of modern computing is frequently characterized as a sudden, mid-twentieth-century rupture in human history—a digital revolution born in the laboratories of the 1940s. However, in a sweeping historical analysis, Dr. David Alan Grier argues that the origins of our algorithmic age are deeply rooted in the soil of the Industrial Revolution. In a comprehensive discussion on the evolution of technology, Grier posits that contemporary computing is not merely a technological phenomenon but a direct continuation of industrial processes aimed at systematization, standardization, and the division of labor. By examining the arc from steam power to silicon, Grier reveals that the blueprint for the smartphone in our pockets was actually drafted centuries ago in the quest for industrial efficiency.

The industrial roots of computing began long before the invention of the vacuum tube. Grier highlights how early scientific endeavors necessitated a radical reorganization of human thought. A primary example is the tracking of Halley’s Comet in the 18th century. Calculating the return of the comet was a task too vast for any single mind; it required the creation of "human computers"—individuals assigned to perform specific, repetitive mathematical tasks within a larger, structured system. This methodology applied the same division of labor found in pin factories to the realm of mathematics. By breaking complex scientific inquiries into repeatable, discrete steps, early thinkers created a human-based processing unit. This structural methodology eventually transitioned into mechanical systems and, finally, the electronic computers we recognize today, suggesting that the "computer" was a job description long before it was a machine.

This transition from human labor to mechanical output was driven by a cultural obsession with standardization. Grier identifies a pervasive drive for uniformity that began with Adam Smith’s The Wealth of Nations and moved through various sectors of society. This culture of standardization was championed by figures like Herbert Hoover, whose engineering focus sought to bring order to chaotic systems, and was codified in the Flexner Report, which standardized medical education in North America. These efforts created a world where data could be categorized and processed at scale. Without this social and professional infrastructure of uniformity, the computational methods of the 20th century would have had no framework upon which to grow. Computing, in this sense, was the natural output of a society that had already decided that every process could—and should—be measured and standardized.

As these standardized systems matured, they began to transform how societies viewed the human experience. Grier details how the advent of telegraphy and the automation of the national census fundamentally changed data collection. For the first time, populations were no longer just groups of citizens; they were datasets. The ability to process large-scale information allowed governments and corporations to view society through a lens of statistical probability. This shift led to a world where data processing began to define the boundaries of the human experience, moving from counting people to predicting their behaviors. The census, in particular, acted as a catalyst for the development of tabulating machines, which served as the direct mechanical ancestors of the modern digital processor.


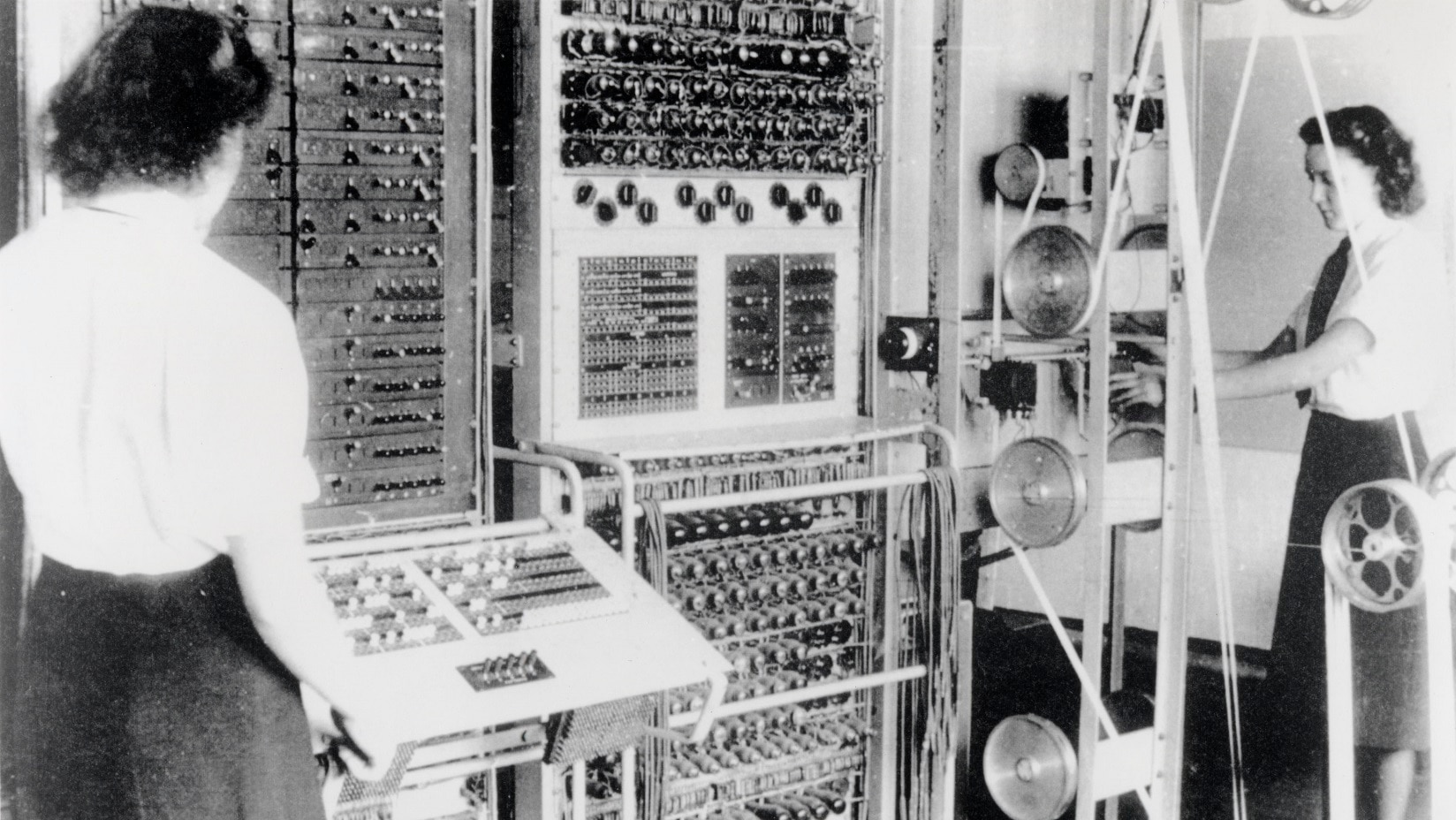

The physical architecture of the technology we use today was eventually solidified in the 1940s, a decade Grier views as the "crystallization" of the industrial computing narrative. It was during this period that figures like John von Neumann and the engineers behind the ENIAC (Electronic Numerical Integrator and Computer) established the fundamental blueprint for the modern computer: a system comprising memory, a processing unit, and an instruction decoder. While the hardware has shrunk from the size of a room to the size of a thumbnail, this underlying logic remains largely unchanged. It is a design that prioritizes the same industrial goals of the 1800s—speed, reliability, and the ability to execute complex instructions through a series of simple, standardized steps.
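
The three-part blueprint described above can be illustrated with a minimal sketch. This is a toy machine, not a model of the ENIAC or of any real design: the four-instruction set (`LOAD`, `ADD`, `STORE`, `HALT`), the `run` function, and the memory layout are all assumptions invented for illustration. It only shows how memory, a processing unit, and an instruction decoder cooperate to carry out a task as a series of simple, standardized steps.

```python
# Toy stored-program machine (hypothetical; instruction names are invented).
# memory -> a Python list holding both instructions and data
# processing unit -> a single accumulator register, `acc`
# instruction decoder -> the if/elif chain that interprets each instruction


def run(memory):
    """Execute the program stored in `memory` and return the final memory."""
    acc = 0  # accumulator: the processing unit's working register
    pc = 0   # program counter: address of the next instruction
    while True:
        op, arg = memory[pc]  # fetch the instruction at the current address
        pc += 1
        if op == "LOAD":      # decode, then execute
            acc = memory[arg]
        elif op == "ADD":
            acc += memory[arg]
        elif op == "STORE":
            memory[arg] = acc
        elif op == "HALT":
            return memory
        else:
            raise ValueError(f"unknown instruction: {op}")


# Instructions and data share one memory.
program = [
    ("LOAD", 4),     # address 0: copy memory[4] into the accumulator
    ("ADD", 5),      # address 1: add memory[5] to the accumulator
    ("STORE", 6),    # address 2: write the accumulator to memory[6]
    ("HALT", None),  # address 3: stop
    2,               # address 4: data
    3,               # address 5: data
    0,               # address 6: the result is written here
]

result = run(list(program))
print(result[6])  # prints 5
```

Each pass through the loop does one small, uniform piece of work, which is the same principle of decomposition that organized the human computing rooms described earlier.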

A significant portion of Grier’s analysis focuses on the historical and ongoing tension between machines and human labor. This friction began when mechanical devices first replaced human "computers" and has evolved into modern anxieties regarding data ownership and the rise of Artificial Intelligence. Grier notes that each wave of innovation renews the debate over who owns a machine's output and what human intuition is worth in an automated world. This struggle is not a new byproduct of the internet age but a recurring theme of the industrial era. The replacement of a person by a mechanical gear in the 19th century follows the same narrative arc we see today when an algorithm replaces a human analyst.

Ultimately, Grier concludes that the current fervor surrounding AI tools is part of a long-standing "U-shaped" narrative of innovation. This pattern typically involves a period of high expectation, followed by a dip as the limitations of the technology become apparent, and a slow recovery as the tool is properly integrated into society. He cautions that while today’s AI is undeniably impressive, it is not infallible. Grier emphasizes that as we move further into an algorithmic age, the importance of human accountability becomes paramount. We cannot, he warns, outsource our moral or professional responsibility to machines that are, at their core, simply the latest and most sophisticated iteration of the industrial assembly line. To understand where our digital future is going, we must first recognize that we are still living through the long, complex afternoon of the Industrial Revolution.